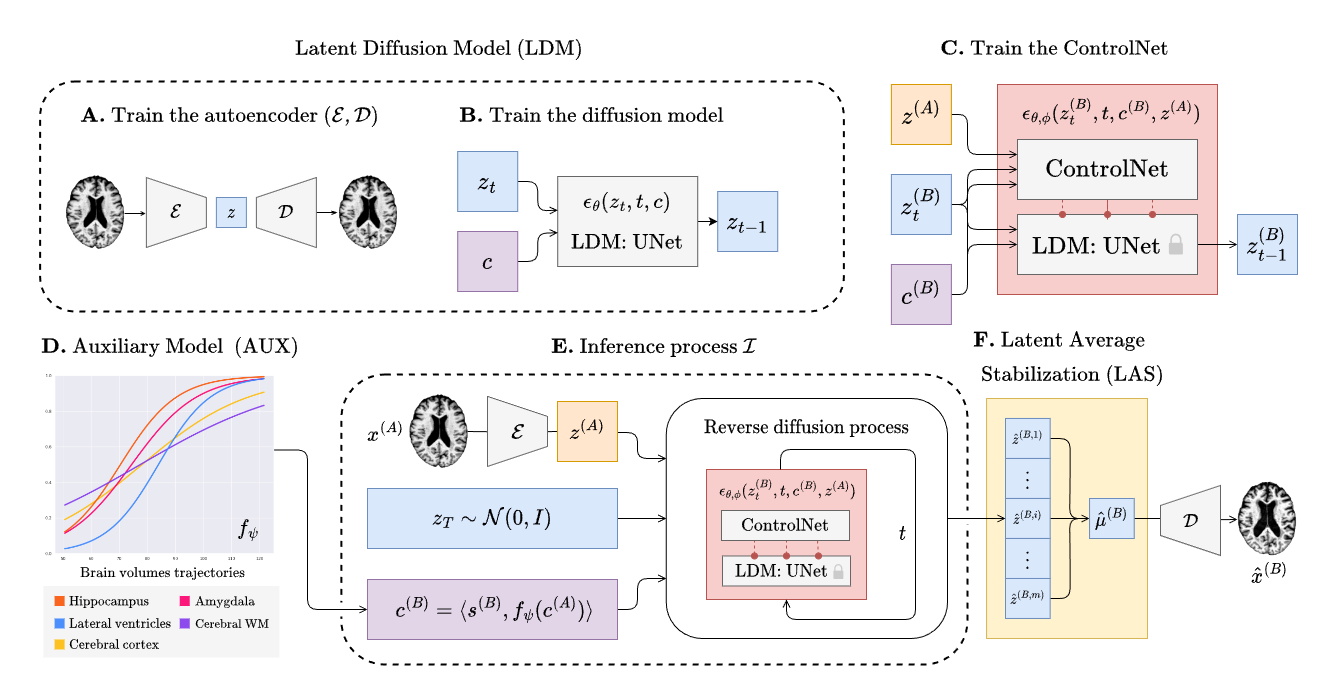

Enhancing Spatiotemporal Disease Progression Models via Latent Diffusion and Prior Knowledge

Lemuel Puglisi, Daniel C. Alexander, Daniele Ravì

https://github.com/user-attachments/assets/28ad3693-5e3e-4f6e-9bbc-485424fbbee2

Installation • Training • CLI Application • Cite

NEWS

- 🎉 BrLP has been awarded the runner-up position for the MedIA Best Paper Award at MICCAI 2025!

- 🎉 A new paper from Vanderbilt University has replicated our results on the BLSA dataset!

- 🆕 The short guide on using the BrLP CLI is out!

- 🎉 BrLP has been nominated and shortlisted for the MICCAI Best Paper Award! (top <1%)

- 🎉 BrLP has been early-accepted and selected for oral presentation at MICCAI 2024 (top 4%)!

Installation

Download the repository, cd into the project folder and install the brlp package:

pip install -e .

Data preparation

Check out our document on Data preparation and study reproducibility. This file will guide you in organizing your data and creating the required CSV files to run the training pipelines.

Training

Training BrLP has 3 main phases, described in the following sections. Every training run (except for the auxiliary model) can be monitored using TensorBoard as follows:

tensorboard --logdir runs

Train the autoencoder

Follow the commands below to train the autoencoder.

# Create an output and a cache directory
mkdir ae_output ae_cache

# Run the training script
python scripts/training/train_autoencoder.py \
  --dataset_csv /path/to/A.csv \
  --cache_dir ./ae_cache \
  --output_dir ./ae_output

Then extract the latents from your MRI data:

python scripts/prepare/extract_latents.py \
  --dataset_csv /path/to/A.csv \
  --aekl_ckpt ae_output/autoencoder-ep-XXX.pth

Replace XXX to select the autoencoder checkpoints of your choice.
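To see which checkpoints are available before filling in XXX, a small helper can list them by epoch. This is a minimal sketch, assuming checkpoints follow the `autoencoder-ep-XXX.pth` naming shown above with XXX being an integer epoch; the function `list_checkpoints` is hypothetical and not part of the brlp package.

```python
import re
from pathlib import Path


def list_checkpoints(directory, prefix="autoencoder"):
    """Return (epoch, path) pairs sorted by epoch for files named <prefix>-ep-<N>.pth."""
    directory = Path(directory)
    if not directory.is_dir():
        return []
    # Assumption: the epoch part of the filename is a plain integer.
    pattern = re.compile(rf"^{re.escape(prefix)}-ep-(\d+)\.pth$")
    found = []
    for path in directory.glob(f"{prefix}-ep-*.pth"):
        match = pattern.match(path.name)
        if match:  # skip files whose epoch part is not an integer
            found.append((int(match.group(1)), path))
    return sorted(found, key=lambda item: item[0])


if __name__ == "__main__":
    for epoch, path in list_checkpoints("ae_output"):
        print(epoch, path)
```

The latest epoch is not necessarily the best one, so it is worth checking the TensorBoard curves before picking a checkpoint. Passing `prefix="unet"` lists UNet checkpoints in the same way.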

Train the UNet

Follow the commands below to train the diffusion UNet. Replace XXX to select the autoencoder checkpoints of your choice.

# Create an output and a cache directory:
mkdir unet_output unet_cache

# Run the training script
python scripts/training/train_diffusion_unet.py \
  --dataset_csv /path/to/A.csv \
  --cache_dir unet_cache \
  --output_dir unet_output \
  --aekl_ckpt ae_output/autoencoder-ep-XXX.pth

Train the ControlNet

Follow the commands below to train the ControlNet. Replace XXX to select the autoencoder and UNet checkpoints of your choice.

# Create an output and a cache directory:
mkdir cnet_output cnet_cache

# Run the training script
python scripts/training/train_controlnet.py \
  --dataset_csv /path/to/B.csv \
  --cache_dir cnet_cache \
  --output_dir cnet_output \
  --aekl_ckpt ae_output/autoencoder-ep-XXX.pth \
  --diff_ckpt unet_output/unet-ep-XXX.pth

Auxiliary models

Follow the commands below to train the DCM auxiliary model.

# Create an output directory
mkdir aux_output

# Run the training script
python scripts/training/train_aux.py \
  --dataset_csv /path/to/A.csv \
  --output_path aux_output

We emphasize that any disease progression model capable of predicting volumetric changes over time is also viable as an auxiliary model for BrLP.
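The training commands above can be collected in one place to review them before launching anything. The following is a minimal sketch that only builds and prints the commands (nothing is executed); the function `build_training_commands` is hypothetical and not part of the brlp package, and the sketch assumes the ControlNet run writes to the `cnet_cache` and `cnet_output` directories created with `mkdir`.

```python
import shlex


def build_training_commands(csv_a, csv_b, ae_epoch, unet_epoch):
    """Return the README's training commands as argument lists; nothing is executed."""
    # Epoch identifiers are passed as strings so no zero-padding is assumed.
    ae_ckpt = f"ae_output/autoencoder-ep-{ae_epoch}.pth"
    unet_ckpt = f"unet_output/unet-ep-{unet_epoch}.pth"
    return [
        ["python", "scripts/training/train_autoencoder.py",
         "--dataset_csv", csv_a,
         "--cache_dir", "./ae_cache",
         "--output_dir", "./ae_output"],
        ["python", "scripts/prepare/extract_latents.py",
         "--dataset_csv", csv_a,
         "--aekl_ckpt", ae_ckpt],
        ["python", "scripts/training/train_diffusion_unet.py",
         "--dataset_csv", csv_a,
         "--cache_dir", "unet_cache",
         "--output_dir", "unet_output",
         "--aekl_ckpt", ae_ckpt],
        # Assumption: ControlNet uses the cnet_* directories created with mkdir.
        ["python", "scripts/training/train_controlnet.py",
         "--dataset_csv", csv_b,
         "--cache_dir", "cnet_cache",
         "--output_dir", "cnet_output",
         "--aekl_ckpt", ae_ckpt,
         "--diff_ckpt", unet_ckpt],
        ["python", "scripts/training/train_aux.py",
         "--dataset_csv", csv_a,
         "--output_path", "aux_output"],
    ]


if __name__ == "__main__":
    for command in build_training_commands("/path/to/A.csv", "/path/to/B.csv", "XXX", "XXX"):
        print(shlex.join(command))
```

Because each checkpoint is chosen only after the previous phase has finished, the commands are meant to be run one at a time, filling in the epoch identifiers as they become known.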

Inference

Our package comes with a brlp command to use BrLP for inference. Check:

brlp --help

The --input parameter requires a CSV file listing all available data for your subjects. For an example, check examples/input.example.csv. If you haven't segmented your input scans, brlp can perform this task for you using SynthSeg, but this requires FreeSurfer >= 7.4 to be installed. The --confs parameter specifies the paths to the models and other inference parameters, such as the LAS parameter $m$. For an example, check examples/confs.example.yaml. Running the program looks like this:

Pretrained models

Download the pre-trained models for BrLP:

| Model                  | Weights URL |
| ---------------------- | ----------- |
| Autoencoder            | link        |
| Diffusion Model UNet   | link        |
| ControlNet             | link        |
| Auxiliary Models (DCM) | link        |

Acknowledgements

We thank the maintainers of open-source libraries for their contributions to accelerating the research process, with a special mention of MONAI and its GenerativeModels extension.

Citing

Medical Image Analysis:

@article{puglisi2025brain,
  title={Brain latent progression: Individual-based spatiotemporal disease progression on 3D brain MRIs via latent diffusion},
  author={Puglisi, Lemuel and Alexander, Daniel C and Rav{\`\i}, Daniele},
  journal={Medical Image Analysis},
  year={2025}
}

MICCAI 2024 proceedings:

@inproceedings{puglisi2024enhancing,
  title={Enhancing spatiotemporal disease progression models via latent diffusion and prior knowledge},
  author={Puglisi, Lemuel and Alexander, Daniel C and Rav{\`\i}, Daniele},
  booktitle={International Conference on Medical Image Computing and Computer-Assisted Intervention},
  pages={173--183},
  year={2024},
  organization={Springer}
}

@inproceedings{mcmaster2025technical,

  title={A technical assessment of latent diffusion for Alzheimer's disease progression},
  author={McMaster, Elyssa and Puglisi, Lemuel and Gao, Chenyu and Krishnan, Aravind R and Saunders, Adam M and Ravi, Daniele and Beason-Held, Lori L and Resnick, Susan M and Zuo, Lianrui and Moyer, Daniel and others},
  booktitle={Medical Imaging 2025: Image Processing},
  volume={13406},
  pages={505--513},
  year={2025},
  organization={SPIE}

}